Internal documents showed Tesla knew Autopilot's limitations could cause accidents but marketed it as self-driving. An NHTSA investigation revealed that multiple crash warnings were ignored.

“Autopilot is designed to assist drivers and requires constant attention. We clearly communicate system limitations to all users.”

From “crazy” to confirmed

A company claims its technology can drive itself. Regulators and safety advocates express doubt. The company insists critics don't understand innovation. Then the lawsuits start, and internal documents tell a different story.

This is the Tesla Autopilot saga—a textbook example of how corporate confidence, regulatory gaps, and marketing language can collide with real-world consequences.

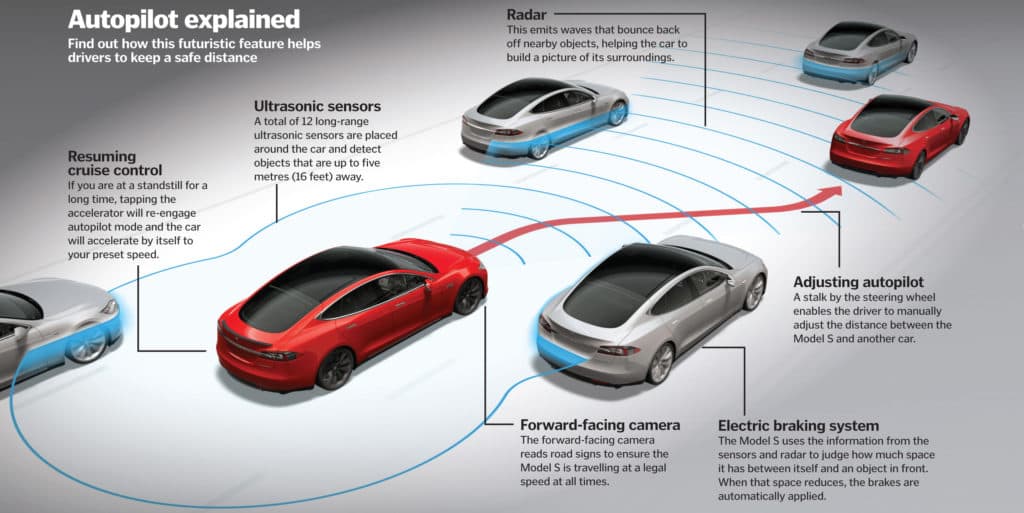

Tesla's Autopilot system emerged as one of the most aggressively marketed driver assistance features in automotive history. Starting around 2015, the company positioned Autopilot not merely as a convenience tool, but as a stepping stone toward full autonomous driving. CEO Elon Musk made sweeping public claims about the technology's capabilities, suggesting that truly self-driving cars were just around the corner. Marketing materials and social media posts blurred the line between what Autopilot actually did and what customers believed it could do.

The catch: internally, Tesla engineers knew the system had serious limitations. The company was aware that Autopilot could fail in low-visibility conditions, struggled with certain road markings, and couldn't reliably recognize stationary objects. Despite this knowledge, Tesla continued marketing the feature as cutting-edge autonomy, often downplaying how much driver supervision was still required.

When accident reports began mounting—including fatal crashes where Autopilot was engaged—Tesla's official position was consistent: driver error. The company argued that Autopilot was designed as a driver assistance system, not an autonomous vehicle, and that users who misunderstood its limitations were responsible for accidents. In many cases, the company pointed out that drivers had been warned to keep their hands on the wheel and their attention on the road.

The problem was that this narrative didn't match what internal evidence suggested. As the National Highway Traffic Safety Administration (NHTSA) investigation escalated, a pattern emerged: Tesla had documented concerns about Autopilot's shortcomings in internal communications, yet had not significantly altered how the feature was presented to consumers. Multiple crash reports and safety warnings that might have prompted faster corrective action had apparently been de-prioritized.

The investigation revealed something harder to dismiss than corporate denial: a discrepancy between what Tesla knew about its product and what it told the public. This wasn't speculation or conspiracy—it was documented in company records. The evidence showed that Tesla had identified specific failure modes and safety gaps, yet continued to market Autopilot in ways that encouraged overconfidence in the system's abilities.

Tesla eventually made software updates and refined its approach, but the damage to public trust was already done. More importantly, the episode exposed a regulatory vulnerability: there was no clear mechanism to catch this kind of gap between internal knowledge and external claims before lives were lost.

This matters because it defines the frontier of corporate accountability in the age of artificial intelligence and automation. When companies are racing to deploy experimental technology, the gap between what they know and what they say becomes a critical safety issue. The Tesla case demonstrates that innovation doesn't exempt companies from transparency, and that marketing language matters when lives depend on understanding a product's real limitations.

The lesson isn't that autonomous technology is inherently dangerous. It's that the public deserves to know what engineers already know.
